As we close another decade of software development and testing, it is, in my opinion, also a great time to get rid of legacy and outdated terms and methods. In this short post, I will try to eliminate the term ROI and recommend replacing it with Value.

If, up until a few years ago, quality management tried to justify investments in testing via the ROI metric, it is now time for a change.

When implementing continuous testing and running test automation cycles multiple times a day, in different environments, by different personas, ROI becomes an obsolete term, since the measures are very different from before.

Objectives of Continuous Testing

Before convincing you to ditch the term ROI, let’s level set on the objectives of modern test automation and especially continuous testing.

From the agile testing manifesto, I am marking in bold the key values behind such testing.

- Continuous testing over testing at the end.

- Embracing all testing activities over only automated functional testing.

- Testing what gives value over testing everything.

- Testing across the team over testing in siloed testing departments.

- Product coverage over code coverage.

Applying the above, the key goal of such testing is to identify business risks through high-value testing that is done by the entire team, across the product, and across all testing types (functional and non-functional).

In the above statements, there is no measure or quantification of anything but the VALUE of the tests.

The Key Pillars of Continuous Testing

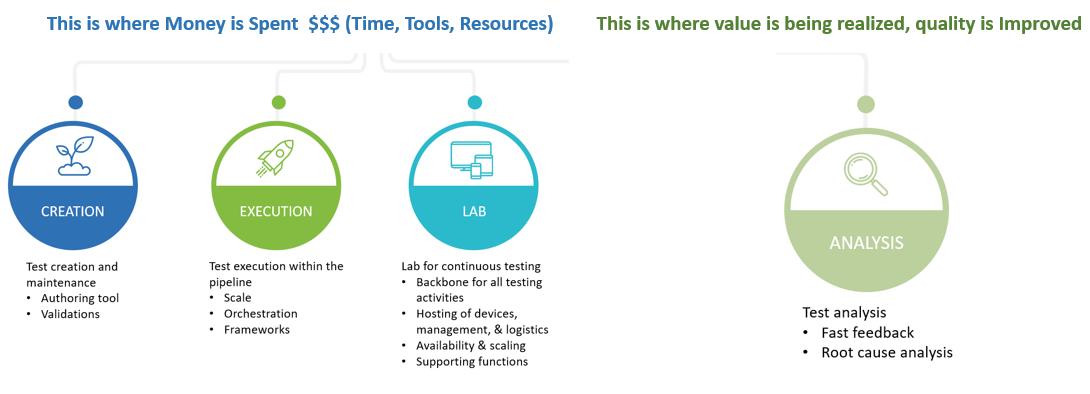

To achieve continuous testing, organizations should focus on having the in-house ability to Create test automation, Execute it at scale on demand, in a solid Lab, and, at the end of the day, Analyze the results using smart methods to make sense of the test results data.

If the above pillars are in line with the organization's testing strategy and priorities, it makes sense that the tools and techniques used to create and execute the tests will match accordingly.

The big questions here are: How do I justify any investment of $$ in the above pillars? What measures are relevant? Who owns quality at each of these steps? What does good look like?

From ROI to Test Value

To address the above questions, let’s identify who is doing testing in today’s Agile and DevOps practices. As DevOps teams are divided into functional groups, with test engineers and developers working hand in hand on specific features within iterations and sprints, there is no single person who really does testing. It is the feature team's responsibility to deliver high-quality and high-value software. With that in mind, you will find business testers, developers, and test automation engineers working together, creating automated test scenarios as well as doing manual exploratory testing to meet their goals. While there might be a modern COE or quality leadership function that oversees the testing strategy within the organization and determines budgets and tools, the actual work is really done within the teams.

If you are in agreement with me that Value is the most important thing in testing, let’s try and break Value into measures:

- Number of flaky tests within a cycle

- Number of tests that repeatedly find defects

- Number of tests that cause CI jobs to fail

- Number of tests that fail, broken down by root cause (Object ID, Lab, Coding skills, Platform state, etc.).
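As a rough illustration, the four measures above could be computed from raw execution records along the following lines. This is only a sketch under stated assumptions: the `RunRecord` fields, the "Defect" root-cause label, the `min_defect_hits` threshold, and the definition of flaky (a test that both passed and failed within the same cycle) are all hypothetical choices, not something prescribed by any reporting tool.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RunRecord:
    """One execution of one test within a cycle (hypothetical schema)."""
    test: str
    passed: bool
    root_cause: Optional[str] = None  # e.g. "Object ID", "Lab", "Defect"
    broke_ci: bool = False            # True if this failure failed the CI job


def value_metrics(runs, min_defect_hits=2):
    """Summarize one cycle's runs into the four value measures."""
    outcomes = defaultdict(set)  # test -> set of pass/fail outcomes seen
    defect_hits = Counter()      # test -> failures classified as real defects
    ci_breakers = set()          # tests whose failure broke a CI job
    by_cause = Counter()         # root cause -> number of failed runs

    for r in runs:
        outcomes[r.test].add(r.passed)
        if r.passed:
            continue
        by_cause[r.root_cause or "Unclassified"] += 1
        if r.root_cause == "Defect":
            defect_hits[r.test] += 1
        if r.broke_ci:
            ci_breakers.add(r.test)

    # Assumption: flaky means the same test both passed and failed in the cycle.
    flaky = [t for t, seen in outcomes.items() if seen == {True, False}]
    repeat_finders = [t for t, n in defect_hits.items() if n >= min_defect_hits]

    return {
        "flaky_tests": len(flaky),
        "repeat_defect_finders": len(repeat_finders),
        "ci_breaking_tests": len(ci_breakers),
        "failures_by_root_cause": dict(by_cause),
    }
```

In practice the root-cause label would come from whatever triage or classification the team's reporting already performs; the sketch simply assumes it is available on each record.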

While there are other metrics, the ones above clearly show whether tests are actually living up to the expectation of finding bugs, or are simply creating noise and waste for the software team.

Some market standards show an average of 10-15 defects per KLOC (1,000 lines of code) and 0.5 defects per KLOC that escape to production. If we follow these numbers, it’s clear that the majority of defects that are found and reported today are noise vs. the REAL ones. Success in continuous testing requires discipline and proper measurement of value to ensure that the majority of failed tests reported as bugs are indeed issues; the opposite will cause a mess within the entire DevOps team.

With that in mind, teams must acknowledge that quality of testing and quality of product are a moment in time! Hence, you need to continuously measure and maintain them to know the actual state of the product.

How Do I Realize Value, Then?

To keep a long story short, there is only one place within the testing life cycle that can provide a single pane of glass on the value vs. waste of your overall testing activities: the TEST REPORT!

If you think about the entire life cycle of a test, from the moment you write the code through its debugging, execution, and commit into the pipeline, the developer (whoever it may be) says goodbye to the test the moment it “passes” in their environment. The reunion between the test owner and the test only happens if the test fails in formal test cycles (CI, a regression triggered by another event, etc.). This means that there is a blind spot from the moment the test is integrated into a suite until it fails. There is no real reason to review a test if it passes, other than being pleased about it (provided, of course, that it is a high-value test).

Now, think about a suite of 1,000 test cases with an average of 10% failures. This means that we now have 100 failed test scenarios that someone needs to review and report on. Add to this the above-mentioned figure of 10-15 defects per KLOC, and the reality is that at least 80% of the failed tests are not real bugs. The team now has to debug 80 noisy test cases that may or may not add value to the product.
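The arithmetic in this example can be written down as a tiny calculation. The function name `triage_load` and its parameters are hypothetical and exist only to make the numbers explicit; the 10% failure rate and 80% noise rate are the figures used above.

```python
def triage_load(total_tests, failure_rate, noise_rate):
    """Split one cycle's failures into noise and real issues."""
    failed = round(total_tests * failure_rate)  # failures someone must review
    noise = round(failed * noise_rate)          # failures that are not real bugs
    return {"failed": failed, "noise": noise, "real": failed - noise}


print(triage_load(1000, 0.10, 0.80))
# {'failed': 100, 'noise': 80, 'real': 20}
```

Under these figures, only 20 of the 100 failures reviewed in the cycle point at real issues.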

I think (and hope) that by now the point is clear: measuring test automation Value starts with the above metrics, and with the understanding that most test cases will not reveal critical bugs when running as regression for the tenth time. To understand which tests add value, which don’t, and which are simply noise and poorly practiced software engineering, you need proper test reporting and quality visibility into each and every domain of your test activities.

Bottom line: investments of time and resources, that is, money, should be made with the value added by these tests in mind. Old-fashioned Pass/Fail per cycle reports are nice, but they cannot keep up with today’s pace of technology, which requires a more diligent review of how tests behave in real time, over time, per platform, and per functional area. And YES, do not get too attached to your test code: if it doesn’t prove itself after a short amount of time, simply Delete it. You can only assess whether the tests bring value via your reporting.
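One possible way to look at test behavior over time, per platform and per functional area, and to shortlist tests for deletion is sketched below. Everything here is an assumption made for illustration: the `Execution` fields, the `min_runs` and `max_noise_rate` thresholds, and the rule itself (a test is a deletion candidate if it has run often enough, has never exposed a confirmed defect, and fails for other reasons too frequently). Each team would pick its own criteria.

```python
from collections import defaultdict
from typing import NamedTuple


class Execution(NamedTuple):
    """One historical test execution (hypothetical schema)."""
    test: str
    platform: str
    area: str
    passed: bool
    confirmed_defect: bool = False  # failure was triaged as a real bug


def failure_rate_by(history, key):
    """Failure rate grouped by any key, e.g. platform or functional area."""
    totals, fails = defaultdict(int), defaultdict(int)
    for e in history:
        k = key(e)
        totals[k] += 1
        fails[k] += not e.passed  # a bool adds as 0 or 1
    return {k: fails[k] / totals[k] for k in totals}


def deletion_candidates(history, min_runs=10, max_noise_rate=0.2):
    """Tests with enough runs, no confirmed defects, and too many noisy failures."""
    runs, noise, defects = defaultdict(int), defaultdict(int), defaultdict(int)
    for e in history:
        runs[e.test] += 1
        if not e.passed:
            if e.confirmed_defect:
                defects[e.test] += 1
            else:
                noise[e.test] += 1
    return sorted(
        t for t in runs
        if runs[t] >= min_runs
        and defects[t] == 0
        and noise[t] / runs[t] > max_noise_rate
    )
```

For example, `failure_rate_by(history, lambda e: e.platform)` gives a per-platform view, while `lambda e: e.area` gives the per-functional-area view of the same history.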

The below visual breaks down the 4 pillars of continuous testing (CT) according to test investments vs. tests value realization.